NVIDIA, AI and Deep Learning – what you need to know

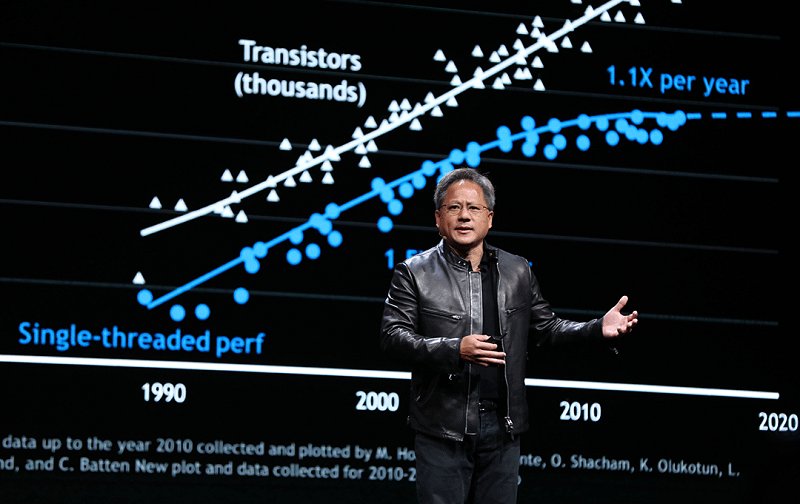

Jen-Hsun Huang addressing the future on stage during GTC 2017

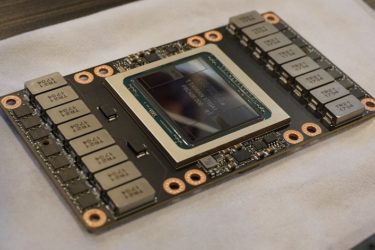

NVIDIA have really shaken things up with the announcement of their Volta architecture and, in particular, their massive V100 chip: the most powerful graphics processor in the world today, built to handle the most intensive high-performance computing tasks, artificial intelligence, and graphics workloads. It is also going into Summit, the most powerful upcoming supercomputer. Compared to its predecessor, the GP100, NVIDIA's new GV100 is in many ways unrecognizable. Built on an improved lithography process, TSMC's 12nm FFN, it packs 5,120 CUDA cores along with the new Tensor cores for specific workloads. Combined with various improvements to the architecture and, most importantly, to the way it uses resources, this makes Volta a much more efficient and more powerful architecture than Pascal, which, we shouldn't forget, was exceptional in and of itself.

Check out all the available NVIDIA (Pascal and earlier) products and their prices here

Some of the GV100 GPU's key characteristics: 21.1 billion transistors; a staggering 815 mm² die, making it one of the largest silicon chips ever produced, if not the largest; an improved NVLink interface; and 16 GB of HBM2 (high-bandwidth memory).

Furthermore, during NVIDIA's own GPU Technology Conference (GTC) 2017, CEO Jen-Hsun Huang talked about the strategies he and his company have employed and how they have helped NVIDIA make so much progress over the years, especially the measures taken with the coming end of Moore's Law in mind. (Moore's Law is the observation that the number of transistors in a dense integrated circuit doubles approximately every two years.) As chips grow increasingly complex while their features keep shrinking, lithography processes are running into new physical obstacles. Extracting more performance is becoming increasingly difficult, because you can no longer simply scale up. Any forward-thinking technology company needs to develop its own way of moving forward in such an environment.
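
Stated this way, Moore's Law is a simple exponential. The short Python sketch that follows is our own illustration, not anything NVIDIA presented: it projects a transistor count forward assuming a clean doubling every two years. The `moores_law_projection` function is a hypothetical helper, and the GV100's 21.1 billion transistors are used only as a convenient starting value, not as a forecast for any future NVIDIA chip.

```python
def moores_law_projection(
    initial_transistors: float, years: float, doubling_period: float = 2.0
) -> float:
    """Project a transistor count forward under an idealized Moore's Law.

    Assumes a perfect doubling every `doubling_period` years; real
    lithography processes increasingly fall short of this curve.
    """
    return initial_transistors * 2 ** (years / doubling_period)


if __name__ == "__main__":
    gv100 = 21.1e9  # GV100 transistor count, used only as a starting point
    for years in (2, 4, 6):
        projected = moores_law_projection(gv100, years)
        print(f"+{years} years: {projected / 1e9:.1f} billion transistors")
```

Under this idealized rule the count would reach about 42.2 billion after two years and 168.8 billion after six. Jen-Hsun's point is precisely that manufacturing can no longer be counted on to keep pace with that curve.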

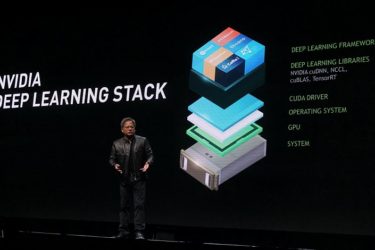

Jen-Hsun said NVIDIA is doing several things to help with this, but his main point was that the company works closely with developers and businesses all over the world to create libraries. These libraries perform well at the software level because NVIDIA specialists optimize them full-time, and those optimizations are then carried into the hardware with today's requirements in mind, creating a very effective feedback loop. NVIDIA puts equal focus on what is needed, what is already present, and what needs to change. That, in turn, lets everyone take full advantage of the hardware available today and gives easy access to a range of developer platforms.

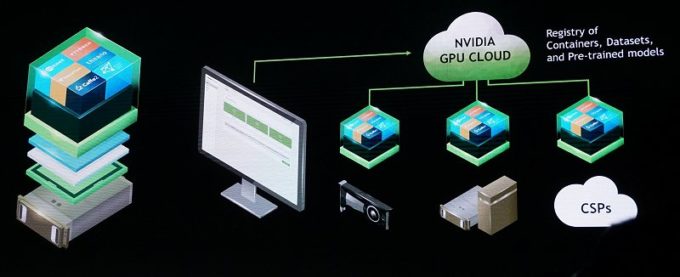

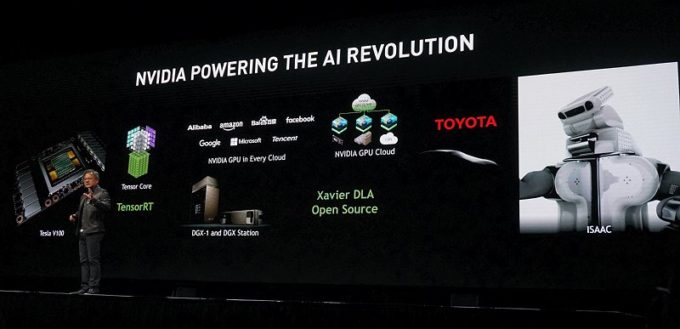

The result is libraries that are purpose-built, optimized and integrated, convenient for the workflow, versatile enough to run anywhere in the world, and easy to use. What better way to position your technology to the world? The world needs efficiency, and NVIDIA is bringing together those who can make use of it. At its most successful GTC yet, Jen-Hsun had over 15 of the biggest automotive companies present when he revealed the V100 accelerator. But an accelerator without the software to back it up is moot, so what else did NVIDIA do? It announced the GPU Cloud, a cloud platform optimized for deep learning, with a beta version coming in July. The appeal of this platform is that it not only helps AI developers build a smarter world, but also lets you do your best work with the latest accelerated computing technology wherever you are in the world, simply by taking advantage of the cloud.

Beyond that, NVIDIA updated its DGX-1 supercomputer with 8 Volta V100 accelerators, raised the price to $149,000, and added the DGX Station to its lineup at roughly half the price. Both machines serve as essential instruments of AI research today. NVIDIA isn't only building the hardware, however; other companies can do just that. It differentiates itself by shipping the software stack its products need to work immediately, on day one, and by providing software support to navigate the realm of deep learning. According to Jen-Hsun, that is just as important as providing the hardware, if not more so. The new DGX-1 costs $20,000 more than the Pascal version it replaces, but the price seems justified given the increase in performance. After all, developing Volta cost billions, and that costly investment has to pay off.

The DGX Station

NVIDIA calls its DGX Station the “Personal AI Supercomputer”. Measured in peak TFLOPS, it delivers nearly three times the performance of its much more expensive, now end-of-life predecessor, the Pascal DGX-1: 480 TFLOPS versus 170 TFLOPS. Yet its price is lower, at $69,000.
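
The DGX pricing and performance figures can be checked with a little arithmetic. This Python sketch uses only numbers quoted in the article, with the Pascal DGX-1's price inferred as $149,000 minus $20,000; the `System` class and its `tflops_per_1000_usd` method are hypothetical helpers for illustration. Keep in mind that these are vendor peak TFLOPS figures and that the Volta number relies on the new Tensor cores, so the ratio is a headline comparison rather than a like-for-like benchmark.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    tflops: float  # vendor-quoted peak TFLOPS (not a like-for-like benchmark)
    price_usd: float

    def tflops_per_1000_usd(self) -> float:
        """Peak TFLOPS delivered per $1,000 of list price."""
        return self.tflops / (self.price_usd / 1000)


# Pascal DGX-1 price inferred from the article: Volta DGX-1 ($149,000) minus $20,000.
pascal_dgx1 = System("DGX-1 (Pascal)", tflops=170, price_usd=149_000 - 20_000)
dgx_station = System("DGX Station (Volta)", tflops=480, price_usd=69_000)

speedup = dgx_station.tflops / pascal_dgx1.tflops
print(f"Peak TFLOPS ratio: {speedup:.2f}x")
for system in (pascal_dgx1, dgx_station):
    print(
        f"{system.name}: ${system.price_usd:,.0f}, "
        f"{system.tflops_per_1000_usd():.2f} TFLOPS per $1,000"
    )
```

Expressing the comparison as TFLOPS per $1,000 makes the DGX Station's value proposition explicit: by these headline numbers it offers several times more peak throughput per dollar than the Pascal DGX-1.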

We keep mentioning AI and deep learning, but what exactly are they? In a nutshell, they are what make our everyday lives easier, from the suggestions you get as you type into a search engine to social networks' ability to recognize you in photos and tag you. AI is easily likened to a sci-fi notion of machines that identify information by extracting raw data from sensors for temperature, speed, pressure and more all around the world. That is also why the Internet of Things is moving at such a fast pace. Language translation, working out how fast an object is traveling, robots teaching other robots: that is all AI, too.

To be at the forefront of such an important time in human history, NVIDIA has undertaken the mighty task of creating the most suitable platforms for deep learning and AI developers. To push toward that aim, it is pursuing several goals. It keeps an eye on start-ups working to advance data science and AI across industries. It aims to provide GPU services through the cloud, so you don't have to own a GPU to make use of what it can offer. And it makes the hardware, provides the frameworks, and keeps improving cuDNN, its CUDA Deep Neural Network library of fine-tuned DNN routines. NVIDIA's history puts it in a very favorable position: its GPU IP and its parallel computing platform CUDA (Compute Unified Device Architecture, which Jen-Hsun emphasized during GTC) have led to today's work in data processing and deep learning, and to its most recent focus, improving AI for driving.

Among the highlights of this driving AI is NVIDIA's smart city vision: AI police cars, AI trucks and AI robots all working together, combining the various aspects of AI to turn today's city into the modern town of tomorrow. One example of this involvement is NVIDIA's partnership with trucking giant Paccar, which aims to make routine driving operations possible without a human driver. How? By using the DRIVE PX 2, a compact in-vehicle supercomputer designed for such driverless operation.

Through collaborations with universities across the world, NVIDIA aims to expand the curriculum. By targeting developers, researchers, and scientists with practical training that familiarizes them with its platforms, it hopes to extend the reach of its tools and technology. NVIDIA also provides hands-on instruction through certified instructors from HP, IBM, Microsoft and others. All of this is coordinated by the Deep Learning Institute (DLI), established in 2016. Net, and TensorFlow.

On top of all the work to familiarize developers with the tools, NVIDIA aims to provide deep learning training labs through partnerships with Facebook, Google, Amazon, universities such as Stanford, and the communities that support the endeavor and are willing to help co-develop the labs with the necessary frameworks.

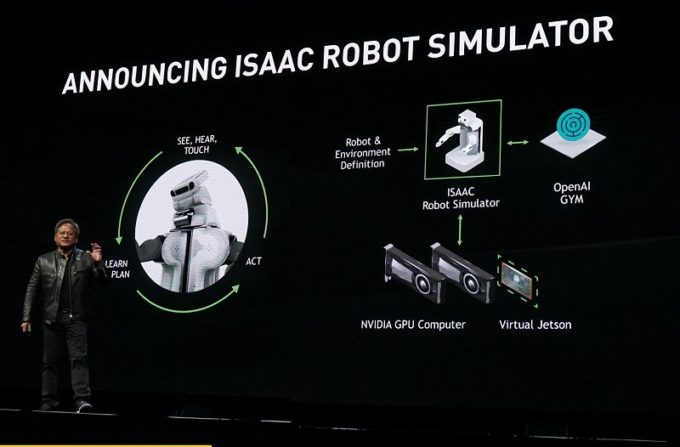

Earlier we mentioned robots training robots. Building and training robots poses significant challenges, and to tackle them NVIDIA has released the ISAAC Robot Simulator, its very first such platform. It lets robots gain expertise in various fields precisely, safely and more rapidly by simulating real-world tasks. You can't have a robot operate on a patient when the patient's life is at stake, but you can simulate the complicated task of performing the surgery by shadowing the human originator, in this case a surgeon. That is key to a safer and faster AI evolution: evolving robots in a virtual environment.

This is a huge statement from NVIDIA. The company is not going to rest; it is enthusiastic about the future and is spending huge resources to bring tomorrow's technology to us today.